flux 3

What is Flux.3?

There are moments when you type a very specific description, like “a 35mm film still of a lone astronaut standing on a crimson dune under twin moons, soft rim light, subtle lens flare, Kodachrome grain”, and what comes back looks like it could hang in a gallery. That’s the quiet thrill this model delivers. It doesn’t just generate images; it renders them with a coherence, lighting logic, and text fidelity that still make experienced artists pause. I’ve watched illustrators who normally spend hours refining Midjourney or Stable Diffusion (SD) outputs look at Flux results and simply say, “Okay, that’s different.” The gap between prompt and polished artwork feels dramatically smaller.

Introduction

Text-to-image has come a long way, but most models still compromise somewhere: their output is either beautiful but anatomically loose, or technically accurate but stylistically flat. Flux.3 refuses that compromise. It combines exceptional prompt adherence, near-perfect human anatomy, readable text in images, and a natural understanding of photographic and artistic lighting, all in one model. The side-by-side comparisons early adopters shared showed a clear pattern: faces don’t warp, hands don’t melt, and compositions hold together even when prompts get complex. For commercial illustrators, concept artists, product visualizers, and anyone who needs visuals that look intentionally crafted rather than “AI-ish,” this model quietly raised the bar.

Key Features

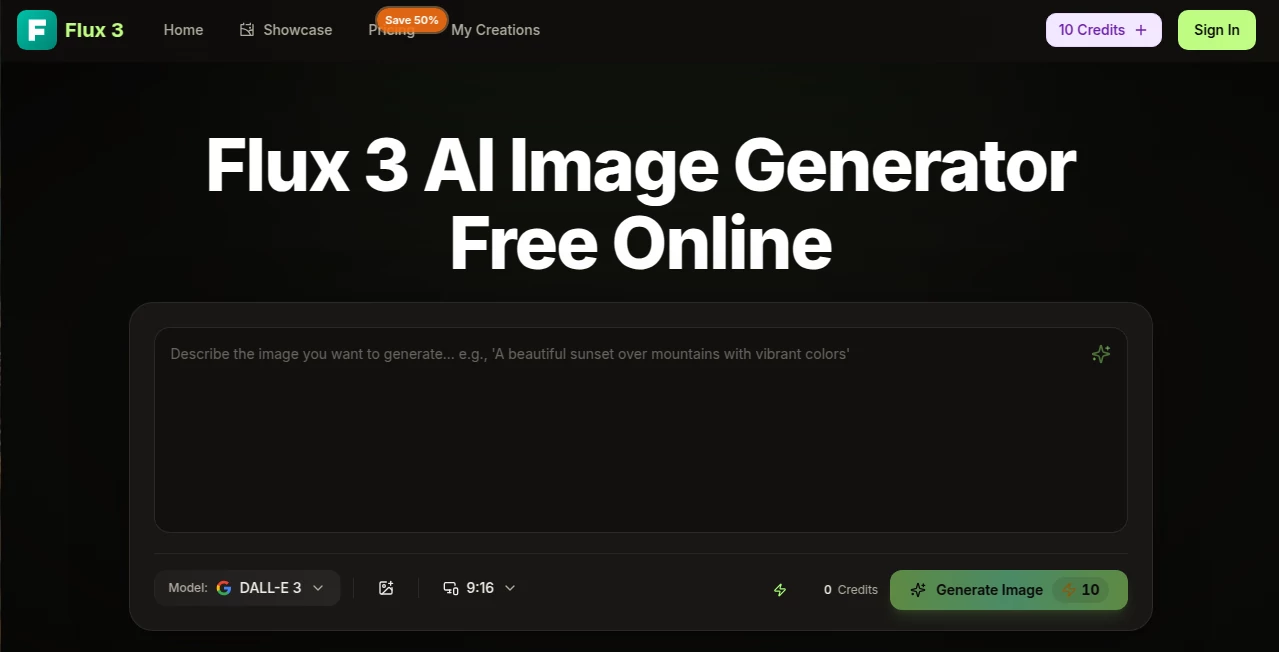

User Interface

Whether you use it through ComfyUI, Forge, or a hosted platform, the experience stays focused: a prompt box, style and quality presets, an optional negative prompt, aspect ratio presets, and a generate button, with nothing extraneous. Advanced users can add ControlNet, LoRAs, or IP-Adapter references without the workflow turning into spaghetti. It loads fast, previews quickly, and rarely gets in your way. The simple surface hides serious engineering underneath.
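Aspect ratio presets typically choose a width and height that match the requested ratio while keeping the pixel count near a target, here about one megapixel to match the 1024×1024 default. The sketch below shows one way such a preset could be computed. `dims_for_aspect` is a hypothetical helper, not part of any UI’s API, and the multiple-of-16 rounding is an assumption based on how earlier FLUX pipelines are commonly run, not a documented Flux.3 requirement.

```python
import math


def dims_for_aspect(ratio_w: int, ratio_h: int,
                    target_pixels: int = 1024 * 1024,
                    multiple: int = 16) -> tuple[int, int]:
    """Return (width, height) near target_pixels with the given aspect ratio.

    Hypothetical helper: the multiple-of-16 rounding is an assumption,
    not a documented Flux.3 requirement.
    """
    # Solve w * h = target_pixels subject to w / h = ratio_w / ratio_h.
    scale = math.sqrt(target_pixels / (ratio_w * ratio_h))
    # Round each side to the nearest allowed multiple, never below one multiple.
    width = max(multiple, round(ratio_w * scale / multiple) * multiple)
    height = max(multiple, round(ratio_h * scale / multiple) * multiple)
    return width, height


if __name__ == "__main__":
    for ratio in [(1, 1), (16, 9), (2, 3)]:
        w, h = dims_for_aspect(*ratio)
        print(f"{ratio[0]}:{ratio[1]} -> {w}x{h}")
```

Rounding each side independently means the pixel count drifts slightly from the target, which is usually acceptable for a preset.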

Accuracy & Performance

Prompt adherence is where it really separates itself: multi-subject scenes stay coherent, spatial relationships make sense, text inside the image is legible (a notorious weak spot for most models), and anatomy holds up even in unusual poses or clothing. Generation times are competitive, often 10–30 seconds for a 1024×1024 image on decent hardware, and the [pro] variant trades some of the [dev] variant’s speed for even higher fidelity. Quality is also remarkably consistent: you don’t get wildly different results from one generation to the next.

Capabilities

Its strengths include exceptional human figure rendering (hands, faces, overlapping limbs), accurate text in images (logos, signs, book covers), a strong grasp of photographic language (lens effects, film grain, depth of field), complex multi-character compositions, diverse art styles (realism, painterly, anime, retro), and robust handling of intricate prompts with many clauses. It supports inpainting and outpainting, ControlNet (depth, pose, canny), IP-Adapter for style or character reference, and LoRA stacking. Its outputs drop cleanly into Photoshop, Blender, or production pipelines.
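LoRA stacking, mentioned above, is usually explained through the standard LoRA formulation. Each adapter adds a low-rank update to a frozen weight matrix, and stacking sums those updates, each scaled by its own strength. Treat this as the general technique as commonly described, not a statement of how Flux.3 or a particular UI implements it.

```latex
W' = W_0 + \sum_{i=1}^{n} \lambda_i \, \frac{\alpha_i}{r_i} \, B_i A_i,
\qquad B_i \in \mathbb{R}^{d \times r_i},\quad A_i \in \mathbb{R}^{r_i \times k},\quad r_i \ll \min(d, k)
```

Here W_0 is the frozen base weight, B_i A_i is the i-th adapter’s low-rank update with rank r_i and scaling factor α_i, and λ_i is the strength slider you set for that adapter in the UI. Because the updates simply add, two LoRAs that push the same weights in different directions can interfere, which is one reason strengths are often lowered when several are stacked.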

Security & Privacy

When you run it locally (via ComfyUI, Forge, SwarmUI, etc.), your prompts and outputs never leave your machine, and nothing depends on the cloud once the weights are downloaded. Hosted platforms vary, but because the weights are open you can always choose a fully offline workflow for sensitive commercial or personal work. The model itself has no forced account linking or data harvesting built in.
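If your setup loads weights through Hugging Face tooling, you can enforce the offline guarantee instead of assuming it. The `huggingface_hub` library reads the `HF_HUB_OFFLINE` environment variable and, when it is set, makes no network calls and uses only locally cached files. The sketch below sets it from Python before any Hugging Face library is imported. Whether ComfyUI, Forge, or SwarmUI route model loading through `huggingface_hub` in your installation is an assumption you should check.

```python
import os

# Must be set before huggingface_hub (or diffusers/transformers) is imported,
# because the library reads this variable when it is first imported.
os.environ["HF_HUB_OFFLINE"] = "1"


def offline_mode_enabled() -> bool:
    """Report whether Hugging Face Hub offline mode is requested via the environment."""
    return os.environ.get("HF_HUB_OFFLINE", "").lower() in {"1", "true", "yes", "on"}


if __name__ == "__main__":
    print("Hugging Face Hub offline mode:", offline_mode_enabled())
```

Setting the variable in your shell or service configuration has the same effect and avoids depending on import order.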

Use Cases

A concept artist generates clean hero character sheets with convincing anatomy and readable prop text for client pitch decks. A small brand creates lifestyle mockups and product hero shots without hiring a photographer. An indie game studio prototypes UI elements, icons, and environment textures with embedded text that actually reads. A book cover designer iterates dozens of compositions with accurate typography in minutes instead of days. A social media creator produces consistent character-driven content across posts without style drift. Wherever visuals need to look intentional and professional on a short timeline, it fits.

Pros and Cons

Pros:

- Best-in-class human anatomy and hand rendering: far less six-finger dread.

- Readable text inside images: logos, signs, and book spines look intentional.

- Superb lighting and material understanding: renders feel physically plausible.

- Strong multi-subject coherence: crowds and interactions don’t collapse.

- Open weights let you run it locally for privacy and unlimited use.

Cons:

- Heavier resource requirements than some smaller models (needs decent VRAM for full power).

- Generation times longer than lightweight alternatives on modest hardware.

- Requires a bit more prompt engineering for absolute edge cases compared to some tuned commercial models.

Pricing Plans

The core model weights are free to download and run locally, with no subscription required. Hosted inference platforms (fal.ai, Replicate, Hugging Face, etc.) charge per image or per compute second; prices vary but generally stay affordable for occasional use. If you own a GPU with 12–24 GB of VRAM, you can run unlimited generations offline after the initial download. For most serious users, the real cost is hardware or occasional hosted credits, not a recurring license fee.
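The hardware-versus-hosted trade-off can be made concrete with a break-even estimate: the number of images after which owning a GPU costs less than paying a hosted platform per image. Every number in the sketch is a hypothetical placeholder, not a price from fal.ai, Replicate, Hugging Face, or any hardware vendor; substitute your own figures. The electricity term uses a generation time inside the 10–30 second range mentioned earlier.

```python
import math
from typing import Optional


def local_energy_cost_per_image(gpu_watts: float, seconds_per_image: float,
                                price_per_kwh: float) -> float:
    """Electricity cost of one local generation, in the currency of price_per_kwh."""
    kwh = gpu_watts * seconds_per_image / 3600 / 1000  # watt-seconds -> kWh
    return kwh * price_per_kwh


def break_even_images(hardware_cost: float, hosted_price_per_image: float,
                      local_cost_per_image: float) -> Optional[int]:
    """Images needed before buying hardware beats paying a hosted platform per image.

    Returns None when local generation is not cheaper per image (no break-even point).
    """
    saving_per_image = hosted_price_per_image - local_cost_per_image
    if saving_per_image <= 0:
        return None
    return math.ceil(hardware_cost / saving_per_image)


if __name__ == "__main__":
    # All inputs are hypothetical placeholders; replace them with your own figures.
    energy = local_energy_cost_per_image(gpu_watts=300, seconds_per_image=20,
                                         price_per_kwh=0.30)
    images = break_even_images(hardware_cost=1500, hosted_price_per_image=0.03,
                               local_cost_per_image=energy)
    print(f"local energy per image: {energy:.4f}; break-even after {images} images")
```

The estimate ignores resale value and the cost of your own time, so treat it as a first-order comparison only.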

How to Use Flux.3

- Install ComfyUI, Forge, or SwarmUI.

- Download the weights (dev or pro variant) from Hugging Face and load the model in your UI.

- Write a descriptive prompt, for example “cinematic portrait of a cyberpunk mechanic fixing a neon sign at night, wet streets, rim lighting, film grain, shot on Arri Alexa”.

- Optionally add a negative prompt and style or quality tags.

- Set the resolution and aspect ratio, then generate.

- For maximum control, add ControlNet depth maps or IP-Adapter reference images.

- Tweak the CFG scale (usually 3.5–7) and step count (20–40) to balance speed and quality.

- Export, then iterate.

The learning curve is gentle if you’ve used ComfyUI, Forge, or a similar image-generation UI before.
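The CFG scale mentioned above controls classifier-free guidance. In the standard formulation, the sampler gets two predictions per step, one conditioned on your prompt and one on an empty or negative prompt, and extrapolates from the second toward the first by the guidance scale. The sketch below applies that formula to plain lists of numbers standing in for noise predictions. It illustrates the general technique; whether Flux.3 uses true two-pass CFG or a distilled guidance input (as the earlier FLUX.1 [dev] model does) is an assumption this page does not settle.

```python
from typing import Sequence


def apply_cfg(cond: Sequence[float], uncond: Sequence[float],
              scale: float) -> list[float]:
    """Classifier-free guidance, element-wise: uncond + scale * (cond - uncond).

    `cond` is the prediction for your prompt; `uncond` is the prediction for an
    empty prompt or, when a negative prompt is set, for the negative prompt.
    """
    if len(cond) != len(uncond):
        raise ValueError("predictions must have the same length")
    return [u + scale * (c - u) for c, u in zip(cond, uncond)]


if __name__ == "__main__":
    cond = [0.2, -0.1, 0.4]    # toy stand-ins for noise predictions
    uncond = [0.1, 0.0, 0.1]
    for s in (1.0, 3.5, 7.0):
        print(s, apply_cfg(cond, uncond, s))
```

At a scale of 1.0 the result equals the prompt-conditioned prediction; larger values push harder toward the prompt and away from the negative prompt, which is why very high settings tend to look over-saturated.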

Comparison with Similar Tools

Compared to earlier open models, it offers dramatically better anatomy, text rendering, and prompt following. Against closed commercial APIs, it trades raw speed for full local control, privacy, and no per-image fees once you’re set up. Where some models produce beautiful but inconsistent outputs, Flux.3 leans toward coherence and realism without sacrificing style flexibility. For creators who value ownership, privacy, and long-term cost predictability, it often ends up the preferred choice.

Conclusion

Flux.3 doesn’t just make pictures—it makes believable scenes with intention and craft. It raises the floor for what “AI-generated” can mean: coherent, well-lit, anatomically sound, and prompt-faithful. For illustrators, game artists, advertisers, filmmakers, and anyone who needs visuals that look thoughtfully created rather than quickly computed, it’s become hard to ignore. When the difference between “good enough” and “actually impressive” shrinks to the cost of a decent GPU and a few minutes of prompt tweaking, something fundamental shifts in creative workflows. That shift is worth experiencing firsthand.

Frequently Asked Questions (FAQ)

Which variant should I use?

Use “dev” for the fastest local inference with excellent quality, or “pro” for maximum detail and prompt adherence (it needs more VRAM).

How much VRAM do I need?

12 GB minimum for comfortable 1024×1024; 16–24 GB ideal for higher res or faster batches.
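One way to sanity-check VRAM figures like these is to estimate how much memory the weights alone occupy: parameter count times bytes per parameter. Text encoders, the VAE, and activations add more, while UIs can quantize or offload to fit smaller cards, so the result is a rough guide to holding the full model on the GPU, not a hard requirement. This page does not state Flux.3’s parameter count, so the 12-billion figure below is a hypothetical placeholder.

```python
BYTES_PER_PARAM = {
    "fp32": 4.0,
    "bf16": 2.0,
    "fp16": 2.0,
    "fp8": 1.0,
    "int4": 0.5,
}


def weight_memory_gb(num_params: float, dtype: str) -> float:
    """Memory for the weights only, in decimal gigabytes (1 GB = 1e9 bytes)."""
    try:
        bytes_per_param = BYTES_PER_PARAM[dtype]
    except KeyError:
        raise ValueError(f"unknown dtype {dtype!r}") from None
    return num_params * bytes_per_param / 1e9


if __name__ == "__main__":
    hypothetical_params = 12e9  # placeholder, not a published Flux.3 figure
    for dtype in ("bf16", "fp8", "int4"):
        print(f"{dtype}: ~{weight_memory_gb(hypothetical_params, dtype):.1f} GB")
```

With that placeholder, bf16 weights alone would not fit on a 12 GB card, which is why quantized (fp8 or 4-bit) weights or CPU offloading are the usual routes to running large image models on smaller GPUs.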

Can I run it commercially?

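According to this listing, yes: the weights are open and commercially usable under the Apache 2.0 license. Earlier FLUX releases licensed each variant differently, so confirm the license attached to the specific weights you download before commercial use.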

Does it do inpainting/outpainting natively?

Yes—via standard ComfyUI/Forge nodes; works very well with ControlNet tile or IP-Adapter.
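A common final step in inpainting workflows is compositing the regenerated region back over the original, so that pixels outside the mask stay untouched. The per-pixel blend is a linear interpolation weighted by the mask. The sketch below shows that compositing step on flat lists of pixel values. `composite_inpaint` is a hypothetical helper; real nodes operate on image tensors and may feather the mask edges first.

```python
from typing import Sequence


def composite_inpaint(original: Sequence[float], generated: Sequence[float],
                      mask: Sequence[float]) -> list[float]:
    """Blend per pixel: mask 1.0 keeps the generated value, 0.0 keeps the original.

    Values in between (for example from a feathered mask edge) mix the two linearly.
    """
    if not (len(original) == len(generated) == len(mask)):
        raise ValueError("original, generated, and mask must be the same length")
    return [m * g + (1.0 - m) * o for o, g, m in zip(original, generated, mask)]


if __name__ == "__main__":
    original = [0.0, 0.0, 1.0]   # toy grayscale pixels
    generated = [1.0, 1.0, 0.0]
    mask = [1.0, 0.0, 0.5]       # inpaint, keep, half-blend
    print(composite_inpaint(original, generated, mask))
```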

Is text inside images reliable?

Among the best of any open model—logos, signs, book covers, UI elements usually come out legible and correctly spelled.

AI Photo & Image Generator, AI Art Generator, AI Design Generator, AI Text to Image

These classifications represent its core capabilities and areas of application.

flux 3 details

Pricing

- Free

Apps

- Web Tools