Seedacne 2

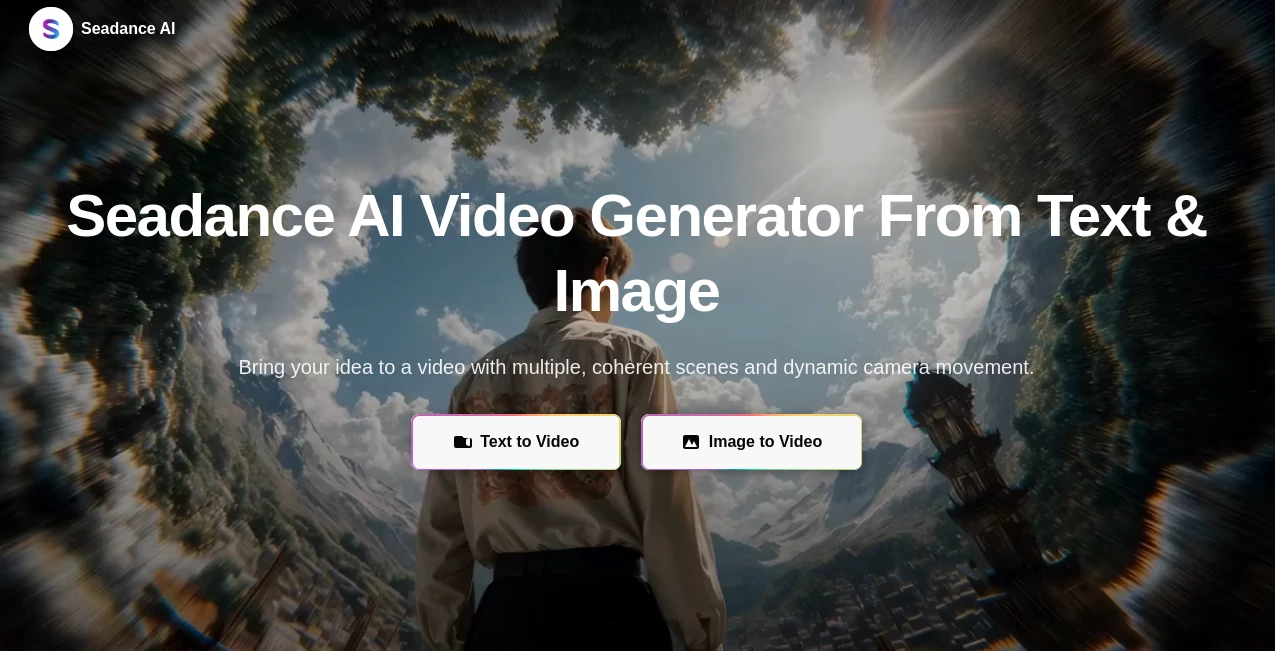

What is Seedacne 2?

There’s a moment when you type a simple scene description—“golden hour, lone figure walking along a misty pier, slow camera push-in”—and seconds later a clip plays that actually feels like it was directed with intention. Lighting falls naturally, motion has weight, and the mood carries through every frame. That’s the quiet thrill this tool delivers. I’ve shown clips to friends who normally dismiss AI video as “cool but glitchy,” and watched their faces change when they realized there were no obvious artifacts, no melting hands, just a coherent little story that looked thoughtfully made. It’s the kind of result that makes you want to keep creating, not because the tech is flashy, but because it finally feels like a real creative partner.

Introduction

Video is still one of the most demanding mediums—storyboarding, shooting, editing, color grading, sound sync. Most AI tools cut corners and leave visible seams. This one quietly refuses to compromise on cinematic quality. Whether you start with text, a single reference image, or both, it builds short sequences that understand pacing, emotion, and visual continuity. Early users started sharing side-by-sides—raw prompt vs final clip—and the leap from static idea to living scene keeps surprising people. For creators who think in motion but don’t have a full production crew, it’s less a gimmick and more a genuine shortcut to something watchable and shareable right away.

Key Features

User Interface

The workspace is calm and focused: a generous prompt box, an optional image upload, simple toggles for aspect ratio and length, and one clear generate button. There are no deeply nested menus or cryptic icons. Previews arrive fast enough that you can iterate without losing momentum. It’s designed so you spend time shaping your vision, not wrestling controls. Beginners finish their first clip in under two minutes; experienced creators appreciate how little friction there is between idea and output.

Accuracy & Performance

Characters remain recognizable across angles and lighting changes. Motion follows natural physics—cloth ripples, hair catches wind, objects interact realistically. Complex prompts with multiple subjects and camera moves rarely break coherence. Generation times stay reasonable (often 20–60 seconds), and the model avoids the usual uncanny jitter or impossible jumps. When it does misinterpret, the mistake is usually traceable to the prompt rather than random failure. That reliability lets you trust the output and focus on creativity.

Capabilities

Supported modes include text-to-video, image-to-video, hybrid guidance (image + text), multi-shot narrative flow, native audio sync for dialogue and effects, and multiple aspect ratios. You can guide generation with references (photos for style, clips for motion, audio for timing), and the result weaves them into a coherent sequence. It’s strong on emotional beats, subtle camera language (push-ins, gentle pans, motivated zooms), and visual continuity across cuts, which makes it feel closer to real filmmaking than most AI video attempts.

Security & Privacy

Inputs are processed temporarily—nothing is retained for training or sold later. No mandatory account linking for basic use. For creators handling client concepts, personal projects, or brand-sensitive content, that clean separation provides real reassurance.

Use Cases

A small brand turns one hero product photo into an elegant 8-second lifestyle clip that outperforms their previous live-action ads. An indie musician creates a visualizer that matches the song’s emotional arc instead of generic loops. A short-form creator builds consistent character-driven Reels without daily filming. A filmmaker sketches key story moments to test tone before full production. The common thread is speed plus quality—getting something watchable and shareable without weeks of work.

Pros and Cons

Pros:

- Outstanding character and style consistency across shots—rare at this quality level.

- Cinematic motion and lighting decisions that feel human-directed.

- Strong hybrid mode (image + text) gives precise creative control.

- Generation speed that supports real creative iteration.

Cons:

- Clip length caps at around 5–10 seconds (though multi-shot workflows extend storytelling).

- Very abstract or contradictory prompts can still confuse it.

- Higher resolutions and priority queues live behind paid access.

Pricing Plans

A meaningful free daily quota lets anyone experience the quality firsthand—no card required to start. Paid plans unlock higher resolutions, longer clips, faster queues, and unlimited generations. Pricing stays reasonable for the leap in output fidelity; many creators find that one month covers what they used to spend on freelance editors or stock footage for a campaign.

How to Use Seedacne 2

Open the generator, write a concise scene description (“late-afternoon loft, young woman in linen shirt pours coffee, soft smile, slow camera push-in”). Optionally upload a reference image for stronger visual grounding (highly recommended for character consistency). Select aspect ratio (vertical for social, horizontal for trailers) and duration. Press generate. Watch the preview—adjust wording or reference strength if the feel isn’t quite right—then download or create variations. For longer narratives, generate individual shots and stitch them in your editor. The loop is fast enough to refine several versions in one sitting.
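If you would rather stitch shots from the command line than in an editor, one possible approach is ffmpeg's concat demuxer. The sketch below is not part of the tool itself: the file names (`shot_01.mp4`, `story.mp4`) and both helper functions are hypothetical, and it assumes every downloaded clip shares the same codec, resolution, and frame rate, which stream copying requires.

```python
import shlex


def concat_list(shots):
    """Return the text of an ffmpeg concat-demuxer list file for the given clips."""
    lines = []
    for shot in shots:
        # Inside a single-quoted path, a literal quote is written as '\''.
        escaped = str(shot).replace("'", "'\\''")
        lines.append(f"file '{escaped}'")
    return "\n".join(lines) + "\n"


def concat_command(list_path, output_path):
    """Build the ffmpeg argument list; '-c copy' joins clips without re-encoding."""
    return [
        "ffmpeg", "-f", "concat", "-safe", "0",
        "-i", str(list_path), "-c", "copy", str(output_path),
    ]


if __name__ == "__main__":
    # Hypothetical clip names, in the order they should play.
    shots = ["shot_01.mp4", "shot_02.mp4", "shot_03.mp4"]
    print(concat_list(shots), end="")  # save this text as shots.txt
    print(shlex.join(concat_command("shots.txt", "story.mp4")))
```

Save the printed list as `shots.txt`, then run the printed command. If the clips were generated with different aspect ratios or durations settings that change the encoding, stream copy may fail and re-encoding in an editor is the safer route.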

Comparison with Similar Tools

Many models still produce visible inconsistencies, odd physics, or lighting jumps between frames. This one prioritizes narrative flow and cinematic intent, often delivering clips that feel closer to human-directed work. The hybrid input mode stands out—letting you steer with text, images, and audio together gives more director-like control than most alternatives offer.

Conclusion

Video creation has always been expensive in time, money, or both. Tools like this quietly lower that barrier so more people can tell visual stories without compromise. It doesn’t replace human taste or vision—it amplifies them. When the distance between an idea in your head and a watchable clip shrinks to minutes, something fundamental shifts. For anyone who thinks in moving pictures, that shift is worth experiencing.

Frequently Asked Questions (FAQ)

How long can generated clips be?

Typically 5–10 seconds per generation; longer storytelling is possible by combining multiple shots.
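As a rough planning aid, the number of shots for a longer piece is the target duration divided by the per-clip length, rounded up. The helper below is a hypothetical illustration of that arithmetic, using the 5–10 second range quoted above; it ignores any time lost to transitions or trimming.

```python
import math


def shots_needed(target_seconds, clip_seconds):
    """Number of clips of a given length needed to cover a target duration."""
    if target_seconds <= 0 or clip_seconds <= 0:
        raise ValueError("durations must be positive")
    return math.ceil(target_seconds / clip_seconds)


if __name__ == "__main__":
    # A 30-second story at the longest and shortest typical clip lengths.
    print(shots_needed(30, 10))  # 3
    print(shots_needed(30, 5))   # 6
```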

Is a reference image required?

No—text-only works well—but adding one dramatically improves consistency.

What resolutions are supported?

Up to 1080p on paid plans; the free tier offers preview-quality output.

Can I use outputs commercially?

Yes—paid plans include full commercial rights.

Is there a watermark on free generations?

Free clips carry a small watermark; paid plans remove it completely.

AI Animated Video, AI Image to Video, AI Video Generator, AI Text to Video.

These classifications represent its core capabilities and areas of application. For related tools, explore the linked categories above.

Seedacne 2 details

This tool is no longer available on submitaitools.org; find alternatives on Alternative to Seedacne 2.

Pricing

- Free

Apps

- Web Tools