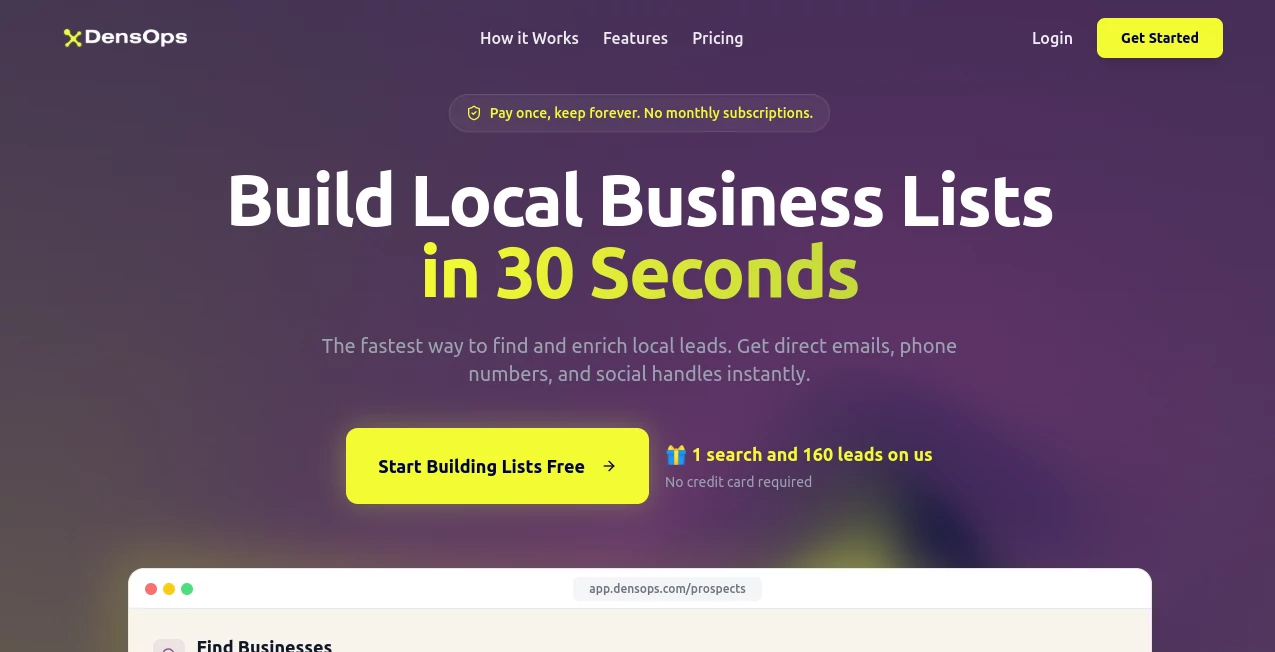

DensOps

What is DensOps?

Turning a single photo into something you can actually light, shade, and composite with real depth used to mean hours of manual rotoscoping, z-depth painting, or praying your photogrammetry scan didn’t collapse. This tool skips most of that pain. Upload almost any image—product shot, portrait, landscape, even a quick iPhone snap—and it returns a clean, usable density/depth map in seconds. The results feel thoughtful: soft falloff on skin, accurate object separation, believable distance gradients. I’ve seen compositors drop these maps into Nuke or After Effects and immediately get shadows and fog that look naturally placed. It’s the kind of shortcut that makes you wonder how you ever worked without it.

Introduction

Depth and density information is the secret sauce behind believable compositing, relighting, and 3D conversion, but getting it has always been either expensive (lidar, stereo rigs) or tedious (manual painting). This platform changes the equation by using modern AI to estimate dense, high-resolution depth from monocular images with surprising accuracy. It’s built for VFX artists, 3D generalists, game devs, and photographers who need quick, production-ready depth passes without setting up complex shoots. The output isn’t just a rough guess—it’s detailed enough for real work: edge-aware occlusion, smooth gradients, and sensible falloff that plays nicely with renderers and comp tools. For anyone who’s ever spent a weekend faking z-depth by hand, the time saved feels almost unfair.
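
To illustrate why a depth pass matters in compositing, here is a minimal sketch of depth-driven fog, one common effect built on such a map. It uses the standard exponential fog formula and assumes depth is normalized so that 0 is nearest and 1 is farthest; the function names, fog color, and density value are illustrative choices, not anything DensOps documents.

```python
import math

def fog_factor(depth, density=1.5):
    """Exponential fog amount in [0, 1) for a depth value.

    Assumes depth 0.0 is at the camera and larger values are farther away.
    """
    return 1.0 - math.exp(-density * depth)

def apply_fog(pixel, depth, fog_color=(0.7, 0.75, 0.8), density=1.5):
    """Blend one RGB pixel (floats in 0..1) toward fog_color based on its depth."""
    f = fog_factor(depth, density)
    return tuple((1.0 - f) * c + f * g for c, g in zip(pixel, fog_color))

# A tiny example: the same red pixel placed at near, mid, and far depth.
pixels = [(0.9, 0.1, 0.1)] * 3
depths = [0.0, 0.5, 1.0]
fogged = [apply_fog(p, d) for p, d in zip(pixels, depths)]

for d, p in zip(depths, fogged):
    print(d, [round(c, 3) for c in p])
```

The near pixel is left unchanged and the far pixel drifts toward the fog color, which is the behavior a compositor would expect from a usable depth pass. If a depth map uses the opposite convention (near = bright), invert it before applying the formula.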

Key Features

User Interface

The interface is blissfully minimal: drag your image in (or paste a URL), choose an output resolution and, if you want one, a style preset, then hit go. Within seconds you see a color-coded depth preview (closer = warm, farther = cool) with a slider to adjust sensitivity. There is a before/after toggle, a zoomable preview, and one-click download in EXR, PNG, or TIFF. No account nagging for basic use, no 47 sliders, just the controls you actually need. It’s one of those tools where the first use feels like cheating because it’s so frictionless.
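
The warm-to-cool preview described above can be sketched as a simple linear color ramp over normalized depth. This is only an assumed reconstruction: the actual palette and mapping DensOps uses are not documented, and the `WARM` and `COOL` colors and function names below are hypothetical.

```python
WARM = (255, 96, 32)   # assumed color for the nearest pixels
COOL = (32, 96, 255)   # assumed color for the farthest pixels

def lerp(a, b, t):
    """Linear interpolation between a and b for t in [0, 1]."""
    return a + (b - a) * t

def depth_to_color(depth, near, far):
    """Map a depth value to an RGB triple: warm at `near`, cool at `far`."""
    if far == near:
        t = 0.0  # flat depth map: treat everything as near
    else:
        t = (depth - near) / (far - near)
    t = min(1.0, max(0.0, t))
    return tuple(round(lerp(w, c, t)) for w, c in zip(WARM, COOL))

def preview(depth_rows):
    """Color-code a 2D depth grid using its own min/max as near/far."""
    flat = [d for row in depth_rows for d in row]
    near, far = min(flat), max(flat)
    return [[depth_to_color(d, near, far) for d in row] for row in depth_rows]

depth_map = [[0.2, 0.5], [0.8, 1.4]]
colors = preview(depth_map)
print(colors)
```

Normalizing against the map's own minimum and maximum keeps the preview readable regardless of the depth units, which matters because monocular estimates are typically relative rather than metric.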

Accuracy & Performance

It consistently outperforms older monocular depth models on edge preservation and relative scale. Skin gets soft, natural gradients; hard surfaces keep crisp boundaries; distant backgrounds fall off smoothly without weird banding. Processing is fast—most 2K images finish in 4–12 seconds—and it handles a wide range of input quality: well-lit studio shots, casual phone photos, even slightly motion-blurred images. The model avoids common pitfalls like “everything is the same distance” flattening or random floating artifacts.

Capabilities

Generates high-resolution depth maps (up to 4K), density/disparity maps for compositing, relative depth for relighting, edge-aware occlusion masks, and optional confidence maps. Supports multiple output formats (EXR float for VFX, 16-bit PNG for general use). Works on portraits, products, architecture, landscapes, vehicles—pretty much any real-world scene. Batch mode (paid) processes dozens of shots at once. The maps integrate cleanly into Nuke, Fusion, After Effects, Blender, Unreal, Unity, and Houdini workflows.
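
To make the format choice concrete, here is a minimal sketch of how float depth values could be quantized for 16-bit versus 8-bit storage, and how depth relates to disparity. It assumes disparity is simply proportional to inverse depth (the usual stereo relation, with scale factors omitted); how DensOps defines its own disparity or density output is not stated, and all function names are illustrative.

```python
def normalize(values):
    """Rescale values linearly to the range [0, 1]."""
    lo, hi = min(values), max(values)
    if hi == lo:
        return [0.0 for _ in values]
    return [(v - lo) / (hi - lo) for v in values]

def quantize(values, bits):
    """Normalize values, then round them to integer levels for the given bit depth."""
    levels = (1 << bits) - 1
    return [round(v * levels) for v in normalize(values)]

def depth_to_disparity(depths, eps=1e-6):
    """Inverse depth; eps guards against division by zero."""
    return [1.0 / max(d, eps) for d in depths]

depths = [1.0, 2.0, 4.0, 8.0]
png16 = quantize(depths, 16)   # values as they would be stored in a 16-bit PNG
png8 = quantize(depths, 8)     # the same data squeezed into 8 bits
disparity = depth_to_disparity(depths)

# Count how many distinct levels survive on a smooth 10,000-step ramp.
ramp = [i / 9999 for i in range(10000)]
distinct_8 = len(set(quantize(ramp, 8)))
distinct_16 = len(set(quantize(ramp, 16)))
print(png16, png8, disparity, distinct_8, distinct_16)
```

An 8-bit channel can hold at most 256 distinct levels, which is where visible banding in smooth gradients tends to come from. A 16-bit PNG allows 65,536, and float EXR avoids fixed quantization steps altogether.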

Security & Privacy

Images are processed in memory and deleted immediately after download—no permanent storage, no training on user uploads. No account required for single generations, so your photos never get linked to a profile unless you opt in for saved projects. For VFX facilities, agencies, or anyone handling client or proprietary imagery, that zero-retention policy is a serious advantage.

Use Cases

A compositor needs quick depth for a product ad—uploads hero shots, gets maps, adds realistic shadows and depth-of-field in minutes instead of days. A game dev photographs real-world props for reference, generates normals + depth, and uses them to prototype materials in-engine. A photographer restores old family portraits and adds subtle 3D parallax for animated social posts. A VFX supervisor tests shot integration by generating depth passes on previs plates before full tracking. Wherever depth information unlocks better lighting, comp, or post, this tool quietly removes the biggest roadblock.
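
The game-dev example mentions normals alongside depth, although normal maps are not listed among the outputs above. One plausible route is to derive approximate normals from the depth map itself using finite differences. The sketch below assumes the depth map is treated as a height field, with normals pointing toward the viewer; the function name and the `strength` parameter are hypothetical, and the x and y signs should be flipped if your engine uses the opposite convention.

```python
import math

def normals_from_depth(depth, strength=1.0):
    """Estimate unit normals from a 2D depth grid via finite differences.

    Uses central differences in the interior and one-sided differences at borders.
    """
    h, w = len(depth), len(depth[0])
    normals = []
    for y in range(h):
        row = []
        for x in range(w):
            # Clamp neighbour indices so border pixels stay inside the grid.
            x0, x1 = max(x - 1, 0), min(x + 1, w - 1)
            y0, y1 = max(y - 1, 0), min(y + 1, h - 1)
            dzdx = (depth[y][x1] - depth[y][x0]) / max(x1 - x0, 1)
            dzdy = (depth[y1][x] - depth[y0][x]) / max(y1 - y0, 1)
            nx, ny, nz = -dzdx * strength, -dzdy * strength, 1.0
            length = math.sqrt(nx * nx + ny * ny + nz * nz)
            row.append((nx / length, ny / length, nz / length))
        normals.append(row)
    return normals

flat = [[0.5] * 3 for _ in range(3)]                       # constant depth
ramp = [[float(x) for x in range(3)] for _ in range(3)]    # depth rises along x
flat_normals = normals_from_depth(flat)
ramp_normals = normals_from_depth(ramp)
print(flat_normals[1][1], ramp_normals[1][1])
```

A flat region yields normals facing straight at the camera, while a slope tilts them away from the direction of increasing depth. Because monocular depth is relative, the `strength` factor usually needs tuning by eye before the normals look right in-engine.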

Pros and Cons

Pros:

- Exceptionally clean edge handling and natural depth gradients.

- Fast enough to use mid-comp session without breaking flow.

- High-res EXR output ready for pro VFX pipelines.

- No account needed for basic use, so friction is very low.

- Results integrate seamlessly into existing workflows (Nuke, AE, Blender, Unreal).

Cons:

- Extremely abstract or painterly images can produce less reliable maps.

- Batch processing and highest resolutions require paid access.

- Very dark or high-contrast scenes sometimes need a minor manual adjustment, for example with the sensitivity slider.

Pricing Plans

Free tier allows several high-res generations per day—more than enough for testing, personal projects, or small shots. Paid plans unlock unlimited generations, batch mode, 4K+ output, priority queue, and commercial licensing. Pricing stays sensible—many freelancers and small studios say one month covers what they used to spend on outsourced depth passes for a single project.

How to Use DensOps

Drag your image (or paste a URL) into the upload area. Select the desired output resolution and any style presets (photoreal, cinematic, etc.). Hit generate, and the preview appears quickly. Use the depth slider to fine-tune intensity if needed. Download as EXR for VFX, PNG for general use, or both. For multiple shots, paid users can batch upload and process dozens at once. The loop is fast enough to iterate on several versions in one sitting.

Comparison with Similar Tools

Older monocular depth estimators often produce noisy, banded, or inconsistent results that require heavy cleanup. This one delivers cleaner edges, better relative scale, and production-ready formats out of the box. Where some tools are slow or resolution-limited, generation here is fast and scales to 4K. It sits in a sweet spot: quality high enough for real pipelines, simplicity high enough for quick tests.

Conclusion

Depth should never be the bottleneck in compositing or 3D conversion—it should be a quick, reliable step that lets you focus on lighting, grading, and storytelling. This tool turns that step into something almost effortless. When you can take a single plate, generate a solid depth pass in seconds, and immediately start relighting or adding effects, the creative possibilities expand dramatically. For VFX artists, game devs, photographers, and anyone working with images in post, that’s not just convenient—it’s transformative.

Frequently Asked Questions (FAQ)

How accurate is the depth estimation?

Very strong on real-world photos; relative depth and edges are reliable enough for most comp and relight work.

What file formats can I download?

EXR (float for VFX), 16-bit PNG, and optional confidence map.

Do I need to sign up to use it?

No—basic generations are anonymous and instant.

Can I batch process multiple images?

Yes—batch mode is available on paid plans.

Are my images stored or used for training?

No—processed in memory and deleted immediately after download.

AI 3D Model Generator, Photo & Image Editor, AI Design Generator, AI Image to Image.

These classifications represent its core capabilities and areas of application. For related tools, explore the linked categories above.

DensOps details

This tool is no longer available on submitaitools.org; find alternatives on Alternative to DensOps.

Pricing

- Free

Apps

- Web Tools