Kling 3

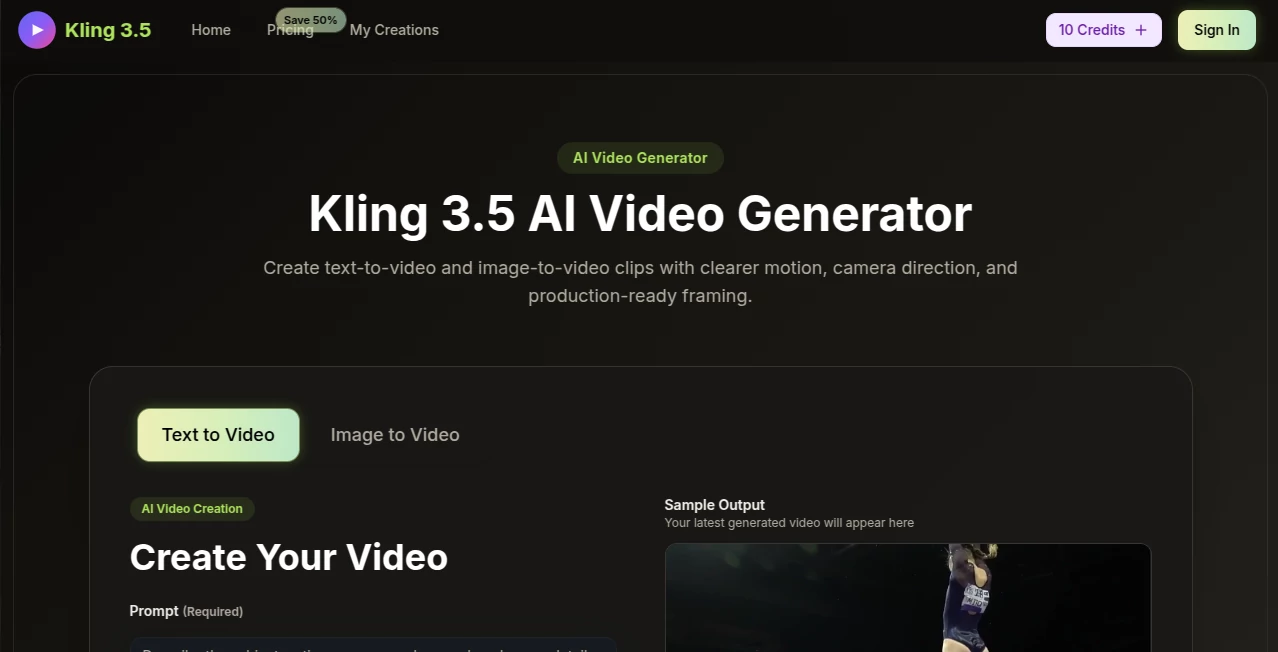

What is Kling 3?

You know that feeling when you have a brilliant video idea stuck in your head, but bringing it to life feels like climbing a mountain? You either need expensive equipment, a full production team, or weeks of editing. That's exactly where this tool steps in to change the game.

After spending countless hours testing various AI video generators, I can honestly say this one hits different. It doesn't just stitch together random visuals based on your prompt. It actually understands how things move in the real world. The way fabric flows, how light bounces off surfaces, the subtle expressions on a character's face: it gets all those tiny details right. What started as an experimental platform has now matured into something genuinely useful for creators, marketers, and anyone who needs quality video content without the usual headaches.

Key Features

Let me walk you through what makes this platform stand out from the crowded AI video space. I've broken down the most important aspects based on real usage, not just marketing claims.

User Interface

The first thing you'll notice is how clean everything looks. No overwhelming dashboards, no buried menus. You simply land on the page, and there's a text box waiting for you. Type what you want to see, hit generate, and that's it. For someone like me who hates wrestling with complicated software, this simplicity is a blessing.

What I especially appreciate is the Prompt Enhancer feature. Let's be honest: not everyone knows how to write perfect technical prompts. This built-in assistant helps refine your descriptions so the output actually matches what you imagined. You can also use negative prompts to exclude things you don't want, which saves a ton of time on re-generations. The whole experience feels designed by people who actually understand how creators work.

Accuracy & Performance

Here's where things get really interesting. The v1.6 update reportedly brought a 195% quality jump over previous versions. That's not just marketing fluff; you can see the difference immediately. Characters maintain their appearance even during complex movements, which is something many competitors still struggle with.

I tested it with a prompt describing a martial artist throwing a punch while air swirls behind them like a dragon. The result genuinely surprised me. The motion had weight and momentum, not that floaty, dreamlike quality you often see in AI videos. Camera movements like pans and zooms execute smoothly, and lighting behaves naturally with proper shadows and reflections. For 5 to 10-second clips, the consistency is remarkable.

Capabilities

This platform supports both text-to-video and image-to-video generation, giving you flexibility depending on your starting point. You can choose from three aspect ratios: 16:9 for YouTube, 9:16 for TikTok and Instagram Stories, or 1:1 for standard social posts. Guidance scale adjustment lets you control how strictly the output follows your prompt. Want more creative freedom? Lower it. Need precise adherence? Crank it up.

The model shows impressive range across different genres. Whether you're creating fantasy scenes, product demonstrations, music visualizers, or cinematic storytelling, it adapts well. Examples I've seen range from "golden-hour street markets with drifting dust motes" to complex action sequences. For rapid iteration on social content, it's particularly well-suited.

Security & Privacy

When working with original content, privacy matters. The platform maintains standard data protection practices for generated videos. While exact long-term storage policies vary depending on your access method (web app vs. API), the service includes watermarking on free outputs and moderation systems. For teams requiring enterprise-level governance, API access through third-party providers may offer additional controls. My advice? Always review current terms before uploading sensitive reference material, but overall, the security posture is comparable to other major players in this space.

Use Cases

Different people will find different value here. Let me share some scenarios where this tool really shines.

- Social Media Creators: Generate vertical clips for TikTok, Reels, and Shorts without hiring a video editor. The 9:16 aspect ratio option is perfect for mobile-first content.

- Marketing Teams: Produce product demos and ad creatives on tight deadlines. One example: rotating a leather bag smoothly to reveal stitching details from every angle.

- Filmmakers & Animators: Use it for pre-visualization, concept testing, or even final elements in short films. The camera control and physics simulation are robust enough for professional workflows.

- Educators: Create engaging instructional videos with dynamic visuals that keep students' attention without complex animation software.

Pros and Cons

No tool is perfect. Here's my honest take on what works well and what could be better.

What Works Well: The motion realism is genuinely impressive — human movements, facial expressions, and object physics feel natural, not robotic. The interface keeps things simple, so you're not fighting the software. Flexible aspect ratios mean one prompt can output content for multiple platforms. The prompt enhancer helps beginners get better results without deep technical knowledge. And compared to hiring videographers or buying equipment, the cost is minimal.

What Could Improve: Each generation typically runs 5-10 seconds, so longer narratives require stitching clips together. The free tier works for testing, but serious work needs a paid plan. Native audio generation exists in some versions but isn't fully documented across all access points. And while quality is high, occasional artifacts still appear with very complex prompts, though far fewer than in earlier versions.
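Since each generation runs 5 or 10 seconds, a longer piece has to be planned as a sequence of clips before stitching. Here is a minimal sketch of that planning step, assuming those two durations are the only options. The `plan_clips` helper is hypothetical and not part of any Kling tool.

```python
def plan_clips(target_seconds: int) -> list[int]:
    """Split a target runtime into 10-second clips plus one shorter
    final clip, covering at least the requested length.

    Assumption: only 5- and 10-second generations are available.
    """
    if target_seconds <= 0:
        raise ValueError("target_seconds must be positive")

    full_clips, remainder = divmod(target_seconds, 10)
    clips = [10] * full_clips
    if remainder > 5:
        clips.append(10)   # leftover does not fit in a 5-second clip
    elif remainder > 0:
        clips.append(5)    # a short clip covers the leftover
    return clips


if __name__ == "__main__":
    for target in (8, 22, 45):
        plan = plan_clips(target)
        print(f"{target}s target -> clips {plan} ({sum(plan)}s generated)")
```

The plan can overshoot the target by a few seconds, which leaves room to trim at the cut points when stitching.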

Pricing Plans

Understanding the cost structure requires separating the web app from API access. For casual users, a free tier exists with limited credits, slower queues, and watermarked outputs. This is fine for testing prompts and getting familiar with the platform.

Paid memberships are credit-based. As of early 2026, the official Kling VIDEO 3.0 pricing model uses credits per second: 720p generation costs 6 credits per second without native audio, or 9 credits per second with audio enabled. 1080p runs 8 to 12 credits per second depending on audio. Specific monthly prices for Standard, Pro, or higher tiers vary by region and promotions, so check the live billing page before purchasing.
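To see what those per-second rates mean for a single clip, here is a rough calculator. It is a sketch under stated assumptions: the 1080p range of "8 to 12" is read as 8 credits without audio and 12 with audio, and `credits_for_clip` is a hypothetical helper name. Rates can change, so treat the table as a snapshot.

```python
# Credits per second as quoted in this review (early 2026).
# Assumption: for 1080p, "8 to 12" means 8 without audio and 12 with audio.
CREDITS_PER_SECOND = {
    ("720p", False): 6,
    ("720p", True): 9,
    ("1080p", False): 8,
    ("1080p", True): 12,
}


def credits_for_clip(seconds: int, resolution: str = "720p", audio: bool = False) -> int:
    """Return the credits one clip would consume at the quoted rates."""
    if seconds <= 0:
        raise ValueError("seconds must be positive")
    key = (resolution, audio)
    if key not in CREDITS_PER_SECOND:
        raise ValueError(f"no quoted rate for resolution {resolution!r}")
    return CREDITS_PER_SECOND[key] * seconds


if __name__ == "__main__":
    print(credits_for_clip(10, "720p"))               # 60
    print(credits_for_clip(5, "720p", audio=True))    # 45
    print(credits_for_clip(10, "1080p", audio=True))  # 120
```

At these rates a 10-second 1080p clip with audio costs twice as much as the same clip at 720p without audio. That matters when you expect to regenerate a prompt several times.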

For developers and high-volume users, pay-as-you-go API routes offer another option, sometimes starting around $0.075 per second depending on the specific model and provider. This can be more predictable for production workflows than subscription bundles.
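Per-second billing makes a batch budget easy to estimate up front. The sketch below uses the roughly $0.075 per second figure mentioned above as a default. The real rate depends on model and provider, and `api_cost_usd` is a hypothetical helper, not a provider API.

```python
from decimal import Decimal

# Assumption: the "around $0.075 per second" starting rate quoted above.
DEFAULT_RATE_USD = Decimal("0.075")


def api_cost_usd(seconds_per_clip: int, clips: int,
                 rate_per_second: Decimal = DEFAULT_RATE_USD) -> Decimal:
    """Estimate the pay-as-you-go cost of a batch of equal-length clips."""
    if seconds_per_clip <= 0 or clips <= 0:
        raise ValueError("seconds_per_clip and clips must be positive")
    return rate_per_second * seconds_per_clip * clips


if __name__ == "__main__":
    # Twenty 10-second clips, e.g. several takes of a few ad variations.
    total = api_cost_usd(seconds_per_clip=10, clips=20)
    print(f"Estimated batch cost: ${total:.2f}")
```

`Decimal` is used instead of floats so the currency arithmetic stays exact.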

How to Use This Video Generator

Getting started takes about two minutes. First, write your prompt describing the subject, action, camera angle, mood, and scene details. Be specific: "golden hour" works better than "nice lighting." Use the Prompt Enhancer if you want AI-assisted optimization. Then select your aspect ratio: 16:9 for widescreen, 9:16 for mobile, or 1:1 for social squares. Add a negative prompt if there are elements you want to avoid, like "blurry" or "distorted face." Adjust the guidance scale: lower values (0.3–0.5) give more natural results, while higher values stick closer to your prompt. Set your duration (5 or 10 seconds). Click generate and wait. Once finished, preview and download. That's the whole workflow.
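If you script your generations or just want to keep settings consistent between runs, the choices in that workflow can be captured in one small validated structure. This is a sketch only. The field names are hypothetical and not the platform's actual API schema, and the 0 to 1 guidance range is an assumption inferred from the 0.3–0.5 values mentioned above.

```python
from dataclasses import dataclass, asdict

ASPECT_RATIOS = {"16:9", "9:16", "1:1"}   # options described in this review
DURATIONS_SECONDS = {5, 10}               # durations described in this review


@dataclass
class GenerationSettings:
    """Hypothetical container for the workflow's settings (not an official schema)."""
    prompt: str
    aspect_ratio: str = "16:9"
    negative_prompt: str = ""
    guidance_scale: float = 0.5   # assumed range: 0.0 to 1.0
    duration_seconds: int = 5

    def __post_init__(self) -> None:
        if not self.prompt.strip():
            raise ValueError("prompt must not be empty")
        if self.aspect_ratio not in ASPECT_RATIOS:
            raise ValueError(f"unsupported aspect ratio: {self.aspect_ratio!r}")
        if self.duration_seconds not in DURATIONS_SECONDS:
            raise ValueError("duration must be 5 or 10 seconds")
        if not 0.0 <= self.guidance_scale <= 1.0:
            raise ValueError("guidance_scale is assumed to lie between 0 and 1")


if __name__ == "__main__":
    settings = GenerationSettings(
        prompt="Street market at golden hour, dust motes drift with backlight, "
               "slow pan, cinematic",
        aspect_ratio="9:16",
        negative_prompt="blurry, distorted face",
        guidance_scale=0.4,
        duration_seconds=10,
    )
    print(asdict(settings))
```

Validating before you hit generate catches typos like "9:15" without spending credits on a failed or unintended run.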

A few pro tips: Include style keywords like "cinematic," "magical realism," or "documentary." Describe not just what happens but how it happens — "dust motes drift with backlight" adds life to a scene. For character consistency, reference the same subject across prompts. And don't be afraid to regenerate; sometimes small wording changes make huge differences.

Comparison with Similar Tools

How does this stack up against alternatives like Runway, Pika, or Sora? Runway Gen-3/Gen-4 sets the professional standard with 4K output and motion brush controls, but it's subscription-only ($12-$76/month) and caps clips around 10-60 seconds depending on the version. It's web-first, so API access feels secondary. Pika Labs is faster and cheaper ($10-$35/month) but has a lower quality ceiling: fine for rapid social iteration but not cinematic work. Sora 2 from OpenAI offers impressive physics and synchronized audio, but access remains invite-only as of late 2025/early 2026. Google's Veo 3 provides enterprise governance through Vertex AI with 8-second clips, but it's developer-focused rather than creator-friendly.

This platform hits a sweet spot: cinematic motion realism comparable to the best in class, flexible aspect ratios, both web app and API access, and more reasonable pricing for medium-volume work. Where it leads is in understanding movement: human actions, camera motion, and physical interactions feel noticeably more natural than in Pika or basic Runway tiers.

Conclusion

After spending real time with this tool, I can say it delivers on its core promise: making high-quality video generation accessible without sacrificing realism. The interface welcomes beginners while the output quality satisfies professionals. Motion handling leads the category, the flexibility across aspect ratios covers every major platform, and the pricing structure offers reasonable entry points whether you're testing ideas or scaling production.

No, it won't replace a full film crew for a feature movie. But for social content, marketing materials, concept visualization, and short-form storytelling, it's genuinely useful right now. The free tier lets you validate that for yourself without risk. If you're tired of fighting complex software or expensive production costs, give it a shot. Sometimes the right tool isn't the most famous one — it's the one that actually works when you need it.

Frequently Asked Questions (FAQ)

Is there a free version? Yes, a free web tier exists with limited credits, slower generation queues, and watermarked outputs. It's great for testing prompts and learning the platform.

How long are generated videos? Standard generations run 5 or 10 seconds per clip. For longer content, you can generate multiple clips and stitch them together.

Can I use my own images as references? Yes, image-to-video generation is supported alongside text-to-video. Upload a reference image, and the model will animate it based on your prompt.

What resolutions are available? Up to 1080p at 30 frames per second. Some providers offer upscaling options, but 1080p is the standard native output.

Is there an API for developers? Yes, API access exists for programmatic generation. Pricing and features differ from the web app, so check developer documentation for current rates.

Do I own the videos I create? Commercial rights depend on your plan tier. Free and lower-tier outputs may include watermarks or usage restrictions. Paid plans generally provide broader commercial rights — verify specific terms on the official site.

How does this compare to Runway or Pika? This platform excels at motion realism and camera control, often surpassing Pika's quality while being more accessible than Runway's professional tiers. Each tool has strengths, but for natural movement and physics, this leads the category.

Kling 3 details

Pricing

- Free

Apps

- Web Tools